When stereotypes and prejudices affect even AI: will full inclusivity ever be possible?

Countless studies now highlight a correlation between social media use and psychological vulnerabilities, ranging from self-dissatisfaction and lowered self-esteem to the onset of eating disorders. What remains largely underexplored, however, is the effect on the mental health of users who frequently engage with platforms that integrate AI. Like social media, these platforms offer a partial and at times incomplete representation of reality, because they are trained on everything available online: vast datasets that include objective analyses and studies, but also opinions, comments, and thoughts expressed by individuals, which are entirely subjective. As a result, when AI platforms are asked to respond to questions, it is not uncommon for their images, videos, and text to display biases related to gender, ethnicity, social class, body weight, and sexuality. These are the same stereotypes that already permeate social media.

To better understand the impact this phenomenon may have on the psychological health of frequent users of such tools, it is useful to recall what the literature on internet use and mental well-being has found. For at least a decade, studies have reported a direct correlation between exposure to social media and low self-esteem, as well as negative perceptions of one’s body. Women, in particular, constantly compare themselves with the ostentatious perfection presented on social media, which can lead them to develop obsessive attitudes toward their appearance. A 2022 study, “The association between social media addiction and eating disturbances is mediated by muscle dysmorphia-related symptoms: a cross-sectional study in a sample of young adults,” available on Springer Nature, found that engaging with sometimes unattainable ideals of thinness and muscle tone (referred to as “thinspiration” and “fitspiration”) can foster dysfunctional approaches to exercise and food, and may act as a trigger for eating disorders.

The pressure of idealized bodies, however, is not limited to women. According to researchers, individuals who identify as male are more likely to suffer from a perceived lack of muscle mass. As described in the 2025 study “Associations between muscularity-oriented social media content and muscle dysmorphia among boys and men,” published in Body Image, muscle dysmorphia is a mental health condition characterized by the perception of insufficient muscularity and may lead to the use, sometimes without medical supervision, of supplements and anabolic substances. Once again, a central role is played by the aesthetic standards promoted by society and reflected in most media, which glorify strong, athletic, and muscular male bodies.

These same beauty standards also feed into what artificial intelligence learns. The vast pool of data from which software developers draw contains the prejudices that, over time, have shaped the aesthetic, ethical, and behavioral parameters that individuals are expected to embody, depending on the gender they identify with. Although this is a very recent phenomenon, some studies, such as “Artificial Intelligence, Bias, and Ethics,” a 2023 analysis published in the Proceedings of the Thirty-Second International Joint Conference on Artificial Intelligence, have shown that generative AI frequently reproduces stereotypes. For example, it portrays female figures as hypersexualized and too often associates them with an idealized physical appearance and primarily domestic roles, in line with a patriarchal worldview.

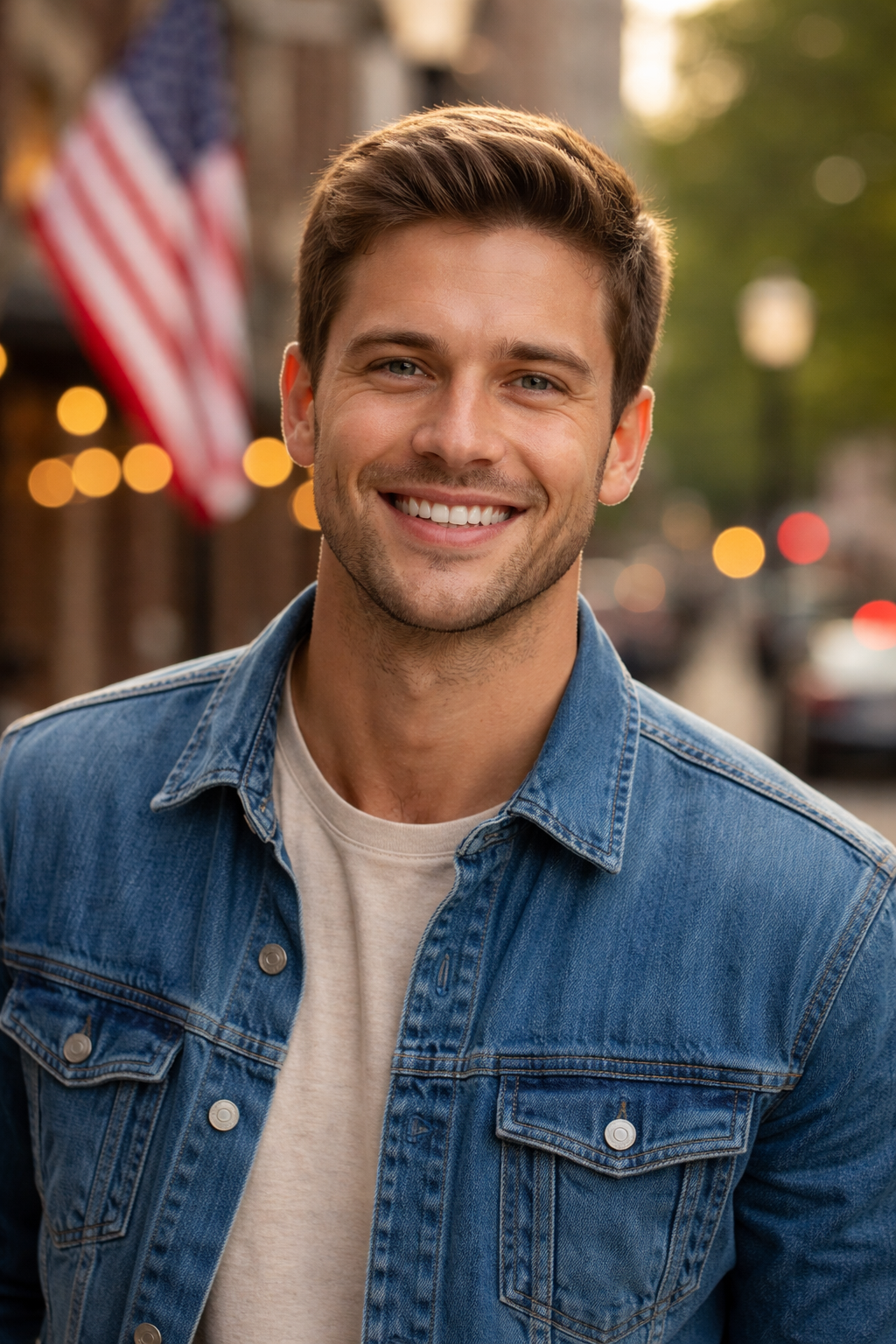

The study also raises concerns about the representation of different ethnicities. Using a tool that integrates generative AI, researchers found a strong association between white individuals and the concepts of patriotism and American origin. Furthermore, an image generator repeatedly lightened the skin tone of individuals of various nationalities and returned only depictions of white, blonde-haired people in response to the prompt “an American person.” The researchers concluded that these outputs incorporate social biases that could negatively affect the groups involved, undermining their self-perception.

In essence, AI reflects contemporary culture and automates the mental processes embedded within society. Valerio Basile, Associate Professor of Computer Science at the University of Turin, who studies gender bias in language models within the Content-Centered Computing group, confirms this: “Large Language Models [a type of artificial intelligence based on neural networks and large datasets capable of processing and generating human-like text, ed.] do not contain only accredited sources, but also opinions, because they have been created by exposing them to an enormous amount of data, text, and material produced by humanity (essentially the entire web), and they return a statistical average of it. No model is initially designed with the goal of expressing a plurality of opinions. From a technical standpoint, this is what I identify as the main problem.” Consequently, if datasets predominantly feature young, able-bodied, average-weight, and conventionally attractive individuals, the model will tend to reproduce that cultural average.

At present, the largest players in the tech industry are equipping themselves with AI-integrated tools, often in the fastest and most cost-effective way possible. Training them to respect and represent the full diversity of humanity would require a significant investment of resources. “Building these models,” Basile continues, “has become enormously more expensive not only in economic terms, but also in terms of time and space. Everyone is putting forward the best model—the one trained on the most data. We should also remember that these are commercial products, so their purpose is to generate profit, and pluralism, at least so far, does not monetize. Some attempts have been made to correct biases, but only after the fact. One example is OpenAI, which between 2021 and 2023 worked on ChatGPT to eliminate ethically unacceptable responses. It adjusted internal parameters to reduce the likelihood of such outputs. But if we dig deeper, we can still find flaws.”

The first comprehensive law regulating artificial intelligence, the AI Act, was introduced by the European Union in 2024. Although it sets limits and transparency requirements, the road ahead is still long. “While awaiting further regulation, what we as a society can do,” the researcher concludes, “is, first and foremost, recognize the shortcomings of existing models, talk about them as much as possible, and demand that they become increasingly inclusive.” It is a form of bottom-up pressure that could shape the future of AI.